While the debate has raged over whether or not film is dead, ARRI, Panavision and Aaton have quietly ceased production of film cameras within the last year to focus exclusively on design and manufacture of digital cameras. That’s right: someone, somewhere in the world is now holding the last film camera ever to roll off the line.

“The demand for film cameras on a global basis has all but disappeared,” says ARRI VP of Cameras, Bill Russell, who notes that the company has only built film cameras on demand since 2009. “There are still some markets–not in the U.S.–where film cameras are still sold, but those numbers are far fewer than they used to be. If you talk to the people in camera rentals, the amount of film camera utilization in the overall schedule is probably between 30 to 40 percent.”

Mary Pickford on the beach about 1916 with film movie camera

At New York City rental house AbelCine, Director of Business Development/Strategic Relationships Moe Shore says the company rents mostly digital cameras at this point. “Film isn’t dead, but it’s becoming less of a choice,” he says. “It’s a number of factors all moving in one direction, an inexorable march of digital progress that may be driven more by cell phones and consumer cameras than the motion picture industry.”

Aaton founder Jean-Pierre Beauviala explains why. “Almost nobody is buying new film cameras. Why buy a new one when there are so many used cameras around the world?” he says. “We wouldn’t survive in the film industry if we were not designing a digital camera.”

Beauviala believes that stereoscopic 3D has “accelerated the demise of film.” He says, “It’s a nightmare to synchronize two film cameras.” Three years ago, Aaton introduced a new 35mm film camera, Penelope, but sold only 50 to 60 of them. As a result, Beauviala turned to creating a digital Penelope, which will be on the market by NAB 2012. “It’s a 4K camera and very, very quiet,” he tells us. “We tried to give a digital camera the same ease of handling as the film camera.”

Panavision is also hard at work on a new digital camera, says Phil Radin, Executive VP, Worldwide Marketing, who notes that Panavision built its last 35mm Millennium XL camera in the winter of 2009, although the company continues an “active program of upgrading and retrofitting of our 35mm camera fleet on an ongoing basis.”

“I would have to say that the pulse [of film] was weakened and it’s an appropriate time,” Radin remarks. “We are not making film cameras.” He notes that the creative industry is reveling in the choices available. “I believe people in the industry love the idea of having all these various formats available to them,” he says. “We have shows shooting with RED Epics, ARRI Alexas, Panavision Genesis and even the older Sony F-900 cameras. We also have shows shooting 35mm and a combination of 35mm and 65mm. It’s a potpourri of imaging tools now available that have never existed before, and an exciting time for cinematographers who like the idea of having a lot of tools at their disposal to create different tools and looks.”

Do camera manufacturers believe film will disappear? “Eventually it will,” says ARRI’s Russell. “In two or three years, it could be 85 percent digital and 15 percent film. But the date of the complete disappearance of film? No one knows.”

From Radin’s point of view, the question of when film will die “can only be answered by Kodak and Fuji. Film will be around as long as Kodak and Fuji believe they can make money at it,” he says.

FILM PRINTS GO UP IN SMOKE

Neither Kodak nor Fuji has made noises about the end of film stock manufacture, but there are plenty of signs that making film stock has become ever less profitable. The need for film release prints has plummeted in the last year and, in an unprecedented move, Deluxe Entertainment Services Group and Technicolor–both of which have been in the film business for nearly 100 years–essentially divvied up the dwindling business of film printing and distribution.

Although the arrangement is couched in the legalese of mutual “subcontracting” deals, the bottom line is that Deluxe will now handle all of Technicolor’s 35mm bulk release print distribution business in North America. Technicolor, meanwhile, will handle Deluxe’s 35mm print distribution business in the U.S. and Deluxe’s 35mm/16mm color negative processing business in London, as well as film printing in Thailand. In the wake of these agreements, Technicolor shut its North Hollywood and Montreal film labs and moved its 65mm/70mm print business to its Glendale, California, facility; and Deluxe ended its 35mm/16mm negative processing service at two facilities in the U.K.

“It’s a stunning development,” says International Cinematographer Guild President Steven Poster, ASC. “We’ve been waiting for it as far back as 2001. I think we’ve reached a kind of tipping point on the acquisition side and, now, there’s a tipping point on the exhibition side.”

“From the lab side, obviously film as a distribution medium is changing from the physical print world to file-based delivery and Digital Cinema,” says Deluxe Digital Media Executive VP/General Manager Gray Ainsworth. “The big factories are absolutely in decline. Part of the planning for this has been significant investments and acquisitions to bolster the non-photochemical lab part of our business. We’re developing ourselves to be content stewards, from the beginning with on-set solutions all the way downstream to distribution and archiving.” Deluxe did exactly that with the 2010 purchase of the Ascent Media post production conglomerate.

Technicolor has also been busy expanding into other areas of the motion picture/TV business, with the purchase of Hollywood post house LaserPacific and a franchise licensing agreement with PostWorks New York. Technicolor also acquired Cinedigm Digital Cinema Corp., expanding its North American footprint in Digital Cinema connectivity to 90 percent. “We have been planning our transition from film to digital, which is why you see our increased investments and clear growth in visual effects and animation, and 2D-to-3D conversion,” says Technicolor’s Ouri. “We know one day film won’t be around. We continue to invest meaningfully in digital and R&D.”

DIGITAL: AN “OVERNIGHT SUCCESS”

Although recent events–the end of film camera manufacturing and the swan dive of the film distribution business–make it appear that digital is an overnight success, nothing could be further from the truth. Digital first arrived with the advent of computer-based editing systems more than 20 years ago, and industry people immediately began talking about the death of film. “The first time I heard film was dead was in 1972 at a TV station with videotape,” says Poster, ASC. “He said, give it a year or two.”

Videotape did overtake film in the TV station, but, in the early 1990s, with the first stirrings of High Definition video, the “film is dead” mantra arose again. Laurence Thorpe, who was involved in the early days of HD cameras at Sony, recalls the drumbeat. “In the 1990s, there were a lot of folks saying that digital has come a long way and seems to be unstoppable,” he says.

The portion of the film ecosystem that has managed the most complete transition to digital is post-production.

According to Technicolor Chief Marketing Officer Ouri, over 90 percent of films are finished with digital intermediates.

But the path to digital domination has also taken place in a world of Hollywood politics and economics. A near-strike by Screen Actors Guild actors, the Japanese tsunami and dramatic changes in the business of theater exhibition have all contributed to the ebbing fortunes of film. Under pressure, any weakness or break in the disciplines that form the art and science of film–from film schools to film laboratories–could spell the final demise of a medium that has endured and thrived for over 100 years.

Two icons of film: above, Technicolor’s new Hollywood H.Q.; below, Kodak’s Rochester H.Q., built in 1914

THREE STRIKES AND YOU’RE OUT?

Until 2008, the bulk of TV productions and all feature films took place under SAG jurisdiction, which covers actors in filmed productions. In the months leading up to the Screen Actors Guild’s 2008 contract negotiations with the Alliance of Motion Picture and Television Producers, SAG leadership balked at several elements, including the new media provisions of the proposed contract. Negotiations stalemated. Not so with AFTRA, the union that covers actors in videotaped (including HD) productions, which inked its own separate agreement with AMPTP.

“When producers realized they could go with AFTRA contracts, but they now had to record digitally, they switched almost overnight,” recalls Poster. Whereas in previous seasons 90 percent of TV pilots were shot on film under SAG jurisdiction, in one fell swoop the 2009 pilot season went digital, with video capturing 90 percent of the pilots. In a single season, the use of film in primetime TV vanished almost completely, never to return.

The Japanese tsunami on March 11, 2011, further pushed TV production into the digital realm. Up until then, TV productions were largely mastered to Sony’s high-resolution HD SR tape, but the sole plant that made the tape, located in the northern city of Sendai, was heavily damaged and ceased operation for several months. With only two weeks’ worth of tape still available, TV producers scrambled to come up with a workaround, leading at least some of them to switch to tapeless delivery, another step into the future of an all-digital ecosystem.

The third, and perhaps most devastating blow to film, comes from the increased penetration of Digital Cinema. According to Patrick Corcoran, National Association of Theatre Owners (NATO) Director of Media & Research/California Operations Chief, at the end of July 2011, “We passed the 50 percent mark in terms of digital screens in the U.S. We’ve been adding screens at a fast clip this year, 700 to 750 a month,” he says.

He notes that the turning point was the creation of the virtual print fee, which allows NATO members to recoup the investment they have to make to upgrade to digital cinema. (Studios, meanwhile, save $1 billion a year on the costs of making and shipping release prints.)

To take advantage of the virtual print fee, theater owners will have to transition screens to digital by the beginning of 2013. “Sometime, in 2013, all the screens will be digital,” says Corcoran. “As the number of digital screens increase, it won’t make economic sense for the studios to make and ship film prints. It’ll be absolutely necessary to switch to Digital Cinema to survive.”

REINVENTING THE FILM LAB

Can the continued production of film stock survive the twin disappearance of film acquisition and distribution? Veteran industry executive Rob Hummel, currently president of Group 47, recalls when, as head of production operations, he was negotiating the Kodak deal for DreamWorks Studios. “At the time, the Kodak representative told me that motion pictures was 6 percent of their worldwide capacity and 7 percent of their revenues,” he recalls. “The rest was snapshots. In 2008 motion pictures was 92 percent of their business and the actual volume hasn’t grown. The other business has just disappeared.”

At Eastman Kodak, Chris Johnson, Director of New Business Development, Entertainment Imaging, counters, “I don’t see a time when Kodak stops making film stock,” noting the year-on-year growth in 65mm film and the popularity of Super 8mm. “We still make billions of linear feet of film,” he says. “Over the horizon as far as we can see, we’ll be making billions of feet of film.”

Yet, as Johnson’s title indicates, Kodak is hedging its bets by looking for new areas of growth. One focus is digital asset management, leveraging its Pro-Tek Vaults for digital, says Johnson, and another is investigating “asset protection film,” a film medium that provides 50 to 100 years of longevity at a lower price point than B&W separation film.

Kodak has also developed a laser-based 3D digital cinema projector. “Our system will give much brighter 3D images because we’re using lasers for the light source,” says Johnson. “And the costs of long-term ownership is much less expensive because the lasers last longer than the light sources for other projectors.”

STORING FOR THE FUTURE

As more than 1 million feet of un-transferred nitrate film worldwide demonstrates, archiving doesn’t get top billing in Hollywood. Although the value of archived material is unarguable, archivists, positioned at the end of a production’s life cycle, have unfortunately had a relatively weak voice in the discussion over transitioning from film to digital.

Since the “film is dead” debate began, archivists have fought to keep elements on film, the only medium that has proven to last well over 100 years. “Most responsible archivists in the industry still believe today that, if you can at all do it, you should still stick it on celluloid and put it in a cold, dry place, because the last 100 years has been the story of nitrate and celluloid,” says Deluxe’s Ainsworth.

He jokes that if the world’s best physicists brought a gizmo to an archivist that they said would hold film for 100 years, the archivist would say, “Fine, come back in 99 years.” “With the plethora of digital files, formats and technologies–some of which still exist and some of which don’t–we’re running into problems with digital files made only five years ago,” he adds.

At Sony Pictures Entertainment, Grover Crisp, Executive VP of Asset Management, Film Restoration and Digital Mastering, notes that “Although it’s a new environment and everyone is feeling their way through, what’s important is to not throw out the traditional sensibilities of what preservation is and means.

“We still make B&W separations on our productions, now directly from the data,” he says. “That’s been going on for decades and has not stopped. Eventually it will be all digital, somewhere down the road, but following a strict conservation approach certainly makes sense.”

Crisp pushes for a dual, hybrid approach. “You need to make sure you’re preserving your data as data and your film as film,” he says. “And since there’s a crossover, you need to do both.” LTO tape, currently the digital storage medium of choice, is backwards compatible only two generations, which means that careful migration is a fact of life–for now at least–in a digital age. “The danger of losing media is especially high for documentaries and indie productions,” says Crisp.

Hummel and his partners at Group 47, meanwhile, believe they have the solution. His company bought the patents for a digital archival medium developed by Kodak: Digital Optical Tape System (DOTS). “It’s a metal alloy system that requires no more storage than a book on a shelf,” says Hummel, who reports that Carnegie Mellon University did accelerated life testing to 97 years.

THE DEATH OF FILM REDUX

Though reports of its imminent death have been exaggerated, more industry observers than before accept the end of film. “In 100 years, yes,” says AbelCine’s Shore. “In ten years, I think we’ll still have film cameras. So somewhere between 10 and 100 years.”

Film camera manufacturers have walked a tightrope, ceasing unprofitable manufacture of film cameras at the same time that they continue to serve the film market by making cameras on demand and upgrading existing ones. But they–as well as film labs and film stock manufacturers–clearly see the future as digital and are acting accordingly.

Will film die? Seen in one way, it never will: our cinematic history exists on celluloid and as long as there are viable film cameras and film, someone will be shooting it. Seen another way, film is already dead…what we see today is the after-life of a medium that has become increasingly marginalized in production and distribution of films and TV. Just as the last film camera was sold without headlines or fireworks, the end of film as a significant production and distribution medium will, one day soon, arrive, without fanfare.

source: CreativeCow.net

The document follows an open letter to the entertainment industry by the VES, which

The document follows an open letter to the entertainment industry by the VES, which  Clips in FCP X’s new Event Library are sorted by both user-created Custom Keywords (blue icons) and Smart Collections. The latter are created automatically by Content Auto-Analysis during import (purple icons).

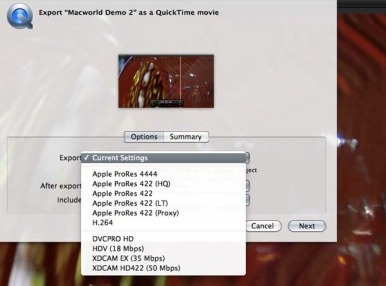

Clips in FCP X’s new Event Library are sorted by both user-created Custom Keywords (blue icons) and Smart Collections. The latter are created automatically by Content Auto-Analysis during import (purple icons). Here are the currently available output options for file delivery when exporting your project directly from FCP X.

Here are the currently available output options for file delivery when exporting your project directly from FCP X. This is the Compound Clip when open.

This is the Compound Clip when open. The Compound Clip command offers a vastly simpler timeline that minimizes the additional track and clip information until needed. Think of it as a nested sequence on steroids. This is the Compound Clip when closed.

The Compound Clip command offers a vastly simpler timeline that minimizes the additional track and clip information until needed. Think of it as a nested sequence on steroids. This is the Compound Clip when closed. Auditions lets you dynamically preview multiple clips within the timeline without disturbing any other media.

Auditions lets you dynamically preview multiple clips within the timeline without disturbing any other media. Applying customizable effects offers you a real-time preview of the effect on your video in the canvas. Note that the thumbnail view in the Effects Window also shows the clip. The new Skimming feature brings added power to the content preview in FCP X.

Applying customizable effects offers you a real-time preview of the effect on your video in the canvas. Note that the thumbnail view in the Effects Window also shows the clip. The new Skimming feature brings added power to the content preview in FCP X. Effects in viewer.

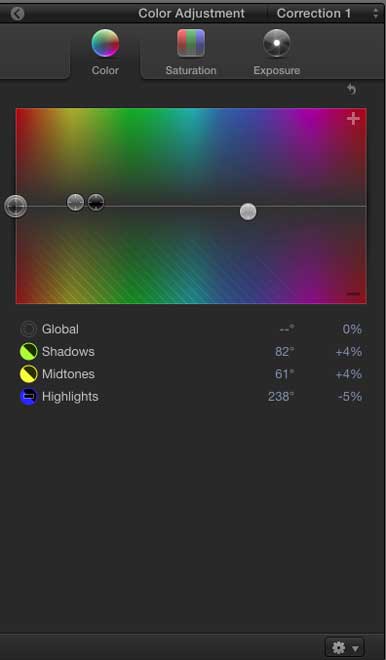

Effects in viewer. The Color Board represents the essence of Apple’s FCP X interface changes. It allows you simple control over exposure, color, and saturation.

The Color Board represents the essence of Apple’s FCP X interface changes. It allows you simple control over exposure, color, and saturation.

Matt Toder has been editing video professionally for eight years, and currently works at Gawker.TV. These are his thoughts on Apple’s latest Final Cut Pro release.

Matt Toder has been editing video professionally for eight years, and currently works at Gawker.TV. These are his thoughts on Apple’s latest Final Cut Pro release.

Cameron and Pace developed under Pace’s company PACE the Fusion 3D system, which was used for the 3D in such films as Avatar, Tron: Legacyand U2: 3D. PACE has begun the formal rebranding process, and its operation under the Cameron-Pace Group banner is effective immediately. CPG will be headquartered in Burbank, Calif., the current home to PACE.

Cameron and Pace developed under Pace’s company PACE the Fusion 3D system, which was used for the 3D in such films as Avatar, Tron: Legacyand U2: 3D. PACE has begun the formal rebranding process, and its operation under the Cameron-Pace Group banner is effective immediately. CPG will be headquartered in Burbank, Calif., the current home to PACE.